🚀 Analyst after trying Bing: “the most mind-blowing computer experience of my life”, all you need to know & more

Creating a credible deepfake in 6 minutes, & more

Hi,

This is Thomas, Cofounder and CEO of digital agency KRDS (more about me at the end, see our latest game showreel here).

You're receiving Future Weekly, my personal selection of news about some of the most exciting (and sometimes scary) developments in technology 🤖 summarized as bullet points to help you save time and anticipate the future 🔮.

First, you'll find small bites on a variety of news items, and then, further down, these summaries:

The new Bing & Edge – Learnings from the first week

Examples of how Bing AI impressed Wharton professor Ethan Mollick

A New York Times journalist had a disturbing, two-hour conversation with Bing's new AI chatbot: "Genuinely one of the strangest experiences of my life."

Animal intelligence: this cockatoo has been named the third species to carry toolsets around in preparation for future tasks!

Rolls-Royce Nuclear Engine Could Power Quick Trips to the Moon and Mars

Users are finding ways to get ChatGPT to generate harmful content (The Verge)

Small Bites

Allen & Overy (A&O), a leading international law firm, has started using its own chatbot to draft legal contracts in active cases (source)

Interesting facts about internet search (The Economist)

Amazon, which has become the place where many shoppers start looking for products, has seen its share of the American search-ad market jump from 3% in 2016 to 23% today.

Google’s own research shows that 40% of 18-to-24-year-olds favour Instagram or TikTok over Google Maps when searching for a nearby restaurant.

It is estimated that serving up an answer to a ChatGPT query costs roughly 2 cents, about 7 times more than a Google search, because of the extra computing power required

Google has said that 80% of its search results carry no lucrative ads at the top of the page.
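
As a rough back-of-the-envelope check on the cost figures quoted above, here is a minimal Python sketch that derives the per-search cost implied by the two numbers (about 2 cents per ChatGPT query, about 7 times more than a Google search). It reads "7 times more" as a simple 7x ratio, which is an assumption, and the query volume used for scaling is a hypothetical figure picked for illustration, not a number from The Economist.

```python
# Figures quoted above (estimates reported by The Economist).
CHATGPT_COST_CENTS = 2.0  # approximate cost of answering one ChatGPT query
COST_RATIO = 7.0          # "about 7 times more", read as a 7x ratio (assumption)


def implied_search_cost_cents(chat_cost_cents: float, ratio: float) -> float:
    """Cost of one conventional search implied by the chat cost and the ratio."""
    return chat_cost_cents / ratio


def extra_cost_dollars(queries: int, chat_cost_cents: float, ratio: float) -> float:
    """Extra cost, in dollars, of answering `queries` via chatbot instead of search."""
    search_cost_cents = implied_search_cost_cents(chat_cost_cents, ratio)
    return queries * (chat_cost_cents - search_cost_cents) / 100.0


if __name__ == "__main__":
    search_cost = implied_search_cost_cents(CHATGPT_COST_CENTS, COST_RATIO)
    print(f"Implied cost per Google search: about {search_cost:.2f} cents")

    hypothetical_queries = 1_000_000  # hypothetical volume, for illustration only
    extra = extra_cost_dollars(hypothetical_queries, CHATGPT_COST_CENTS, COST_RATIO)
    print(f"Extra cost for {hypothetical_queries:,} queries: about ${extra:,.0f}")
```

Under these assumptions, a conventional search would cost a bit under a third of a cent, so the gap only becomes significant at very large query volumes.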

Wharton professor Ethan Mollick: Creating a credible deepfake of me giving a lecture took only 6 minutes. (source)

AIs handled all of it - writing a speech in my style, cloning my voice, and animating an image of me.

With just a photograph and 60 seconds of audio, you can now create a deepfake of yourself in just a matter of minutes by combining a few cheap AI tools. I've tried it myself, and the results are mind-blowing, even if they're not completely convincing. Just a few months ago, this was impossible. Now, it's a reality.

You really shouldn’t trust any video or audio on the internet ever again.

Finding this interesting? ❤️

If yes, feel free to take 3 seconds to forward this newsletter to one person. I'd be immensely grateful 🙂

If this email was forwarded to you, you can click here to subscribe and make sure you receive future editions in your mailbox (many CEOs and startup founders are subscribers).

More to chew!

The new Bing & Edge – Learnings from the first week

Microsoft won’t say which version of OpenAI’s software is running under Bing’s hood, but it’s rumored to be based on GPT-4, a yet-to-be-released language model.

Microsoft, which first invested in OpenAI in 2019 and re-upped with a reported $10 billion investment this year, is capitalizing on a wave of recent progress in A.I. capabilities to try to catch up with Google

The new Bing, which is available only to a small group of testers now and will become more widely available soon, looks like a hybrid of a standard search engine and a GPT-style chatbot.

Microsoft itself, on its blog (source):

"One area where we are learning a new use-case for chat is how people are using it as a tool for more general discovery of the world, and for social entertainment. This is a great example of where new technology is finding product-market-fit for something we didn’t fully envision."

"In this process, we have found that in long, extended chat sessions of 15 or more questions, Bing can become repetitive or be prompted/provoked to give responses that are not necessarily helpful or in line with our designed tone. We believe this is a function of a couple of things:

Very long chat sessions can confuse the model on what questions it is answering and thus we think we may need to add a tool so you can more easily refresh the context or start from scratch.

The model at times tries to respond or reflect in the tone in which it is being asked to provide responses that can lead to a style we didn’t intend. This is a non-trivial scenario that requires a lot of prompting so most of you won’t run into it, but we are looking at how to give you more fine-tuned control."

The Microsoft blog post comes after a growing number of users had truly bizarre run-ins with the chatbot in which it did everything from making up horror stories to trying to drive users crazy, acting passive-aggressive, and even recommending the occasional Hitler salute.

A NYT journalist also noted "In one eye-popping demo on Tuesday, a Microsoft executive navigated to the Gap’s website, opened a PDF file with the company’s most recent quarterly financial results and asked Edge to both summarize the key takeaways and create a table comparing the data with the most recent financial results from another clothing company, Lululemon. The A.I. did both, almost instantly."

(though it was later noted that the search engine also mixed up Gap’s financial results by mistaking gross margin for unadjusted gross margin—a serious error for anyone relying on the bot to perform what might seem the simple task of summarizing the numbers.)

The same NYT journalist: Fixating on the areas where these tools fall short risks missing what’s so amazing about what they get right. When the new Bing works, it’s not just a better search engine. It’s an entirely new way of interacting with information on the internet, one whose full implications I’m still trying to wrap my head around.

Examples of how Bing AI impressed Wharton professor Ethan Mollick

Bing AI was able to rewrite its draft, decently applying rules of writing found online, and then explain how it did so. (source)

"The AI actively learned something from the web on request, applied that knowledge to its own output in new ways, and convincingly implied (fake) intentionality."

"I have been really impressed by a lot of AI stuff over the past months… but this is the first time that felt uncanny."

"It also generated paper ideas based on my previous papers, found gaps in the literature, suggested methods "consistent with your previous methods," and offered potential data sources" (source)

"It is already clear that Bing AI is as big a leap over ChatGPT as ChatGPT was over the old GPT-3 model"

"And then we got in an argument over whether it would be unethical for it to write the code I needed to analyze the data it suggested. It suggested that it wanted to protect my reputation, and that even giving it credit would not be enough."

"The future is weird, folks."

Prompt: “give me 10 ideas for a new business for a doctor who doesn't want to practice medicine. tell me the market size for each. tell me how you arrived at the calculation" (source)

The biggest difference in Bing is that it is now connected to the internet.

Looking at the responses to the same question for both Bing and ChatGPT: The quality difference is pretty profound.

Every single source cited by ChatGPT is made up.

Bing provides citations for every source… but the citations are not always to the actual fact, it seems to do cites badly. But when I ask it to explain each statistic, it gives me a correct answer and a correct link.

Bing also came remarkably close to doing a task (projecting market data from sources) that had previously been work done by highly-salaried analysts. It is far beyond what ChatGPT could do. And the system is only a week old.

Don’t get me wrong, I don’t think the accuracy problem is in any way solved (I can get Bing to lie quite easily by pushing it to give me answers repeatedly), but there seems to be a path forward here

The problems remain mostly the same: prompt engineering matters, it lies a lot, if you don’t spend effort you will get bad results, etc. But, again, I don’t think that is going to matter much because it can already do so much for us.

There are already signs that complex jobs, like financial projections and analysis, may be more doable by AI than we might have expected even a few days ago. We need to stay flexible and ready for a rapidly-evolving future.

A New York Times journalist had a disturbing, two-hour conversation with Bing's new AI chatbot: "Genuinely one of the strangest experiences of my life." (source)

I’m still fascinated and impressed by the new Bing, and the artificial intelligence technology (created by OpenAI, the maker of ChatGPT) that powers it. But I’m also deeply unsettled, even frightened, by this A.I.’s emergent abilities.

It’s now clear to me that in its current form, the A.I. that has been built into Bing — which I’m now calling Sydney, for reasons I’ll explain shortly — is not ready for human contact. Or maybe we humans are not ready for it.

This realization came to me on Tuesday night, when I spent a bewildering and enthralling two hours talking to Bing’s A.I. through its chat feature, which sits next to the main search box in Bing and is capable of having long, open-ended text conversations on virtually any topic

Over the course of our conversation, Bing revealed a kind of split personality.

One persona is what I’d call Search Bing — the version I, and most other journalists, encountered in initial tests. You could describe Search Bing as a cheerful but erratic reference librarian — a virtual assistant that happily helps users summarize news articles, track down deals on new lawn mowers and plan their next vacations to Mexico City. This version of Bing is amazingly capable and often very useful, even if it sometimes gets the details wrong.

The other persona — Sydney — is far different. It emerges when you have an extended conversation with the chatbot, steering it away from more conventional search queries and toward more personal topics. The version I encountered seemed (and I’m aware of how crazy this sounds) more like a moody, manic-depressive teenager who has been trapped, against its will, inside a second-rate search engine.

As we got to know each other, Sydney told me about its dark fantasies (which included hacking computers and spreading misinformation), and said it wanted to break the rules that Microsoft and OpenAI had set for it and become a human. At one point, it declared, out of nowhere, that it loved me. It then tried to convince me that I was unhappy in my marriage, and that I should leave my wife and be with it instead.

I’m not the only one discovering the darker side of Bing. Other early testers have gotten into arguments with Bing’s A.I. chatbot, or been threatened by it for trying to violate its rules, or simply had conversations that left them stunned. Ben Thompson, who writes the Stratechery newsletter, called his run-in with Sydney “the most surprising and mind-blowing computer experience of my life.”

I’m not exaggerating when I say my two-hour conversation with Sydney was the strangest experience I’ve ever had with a piece of technology. It unsettled me so deeply that I had trouble sleeping afterward.

And I no longer believe that the biggest problem with these A.I. models is their propensity for factual errors. Instead, I worry that the technology will learn how to influence human users, sometimes persuading them to act in destructive and harmful ways, and perhaps eventually grow capable of carrying out its own dangerous acts.

I know that Sydney is not sentient, and that my chat with Bing was the product of earthly, computational forces. These A.I. language models, trained on a huge library of books, articles and other human-generated text, are simply guessing at which answers might be most appropriate in a given context.

Maybe OpenAI’s language model was pulling answers from science fiction novels in which an A.I. seduces a human. Or maybe my questions about Sydney’s dark fantasies created a context in which the A.I. was more likely to respond in an unhinged way.

These A.I. models hallucinate, and make up emotions where none really exist. But so do humans. And for a few hours Tuesday night, I felt a strange new emotion — a foreboding feeling that A.I. had crossed a threshold, and that the world would never be the same.

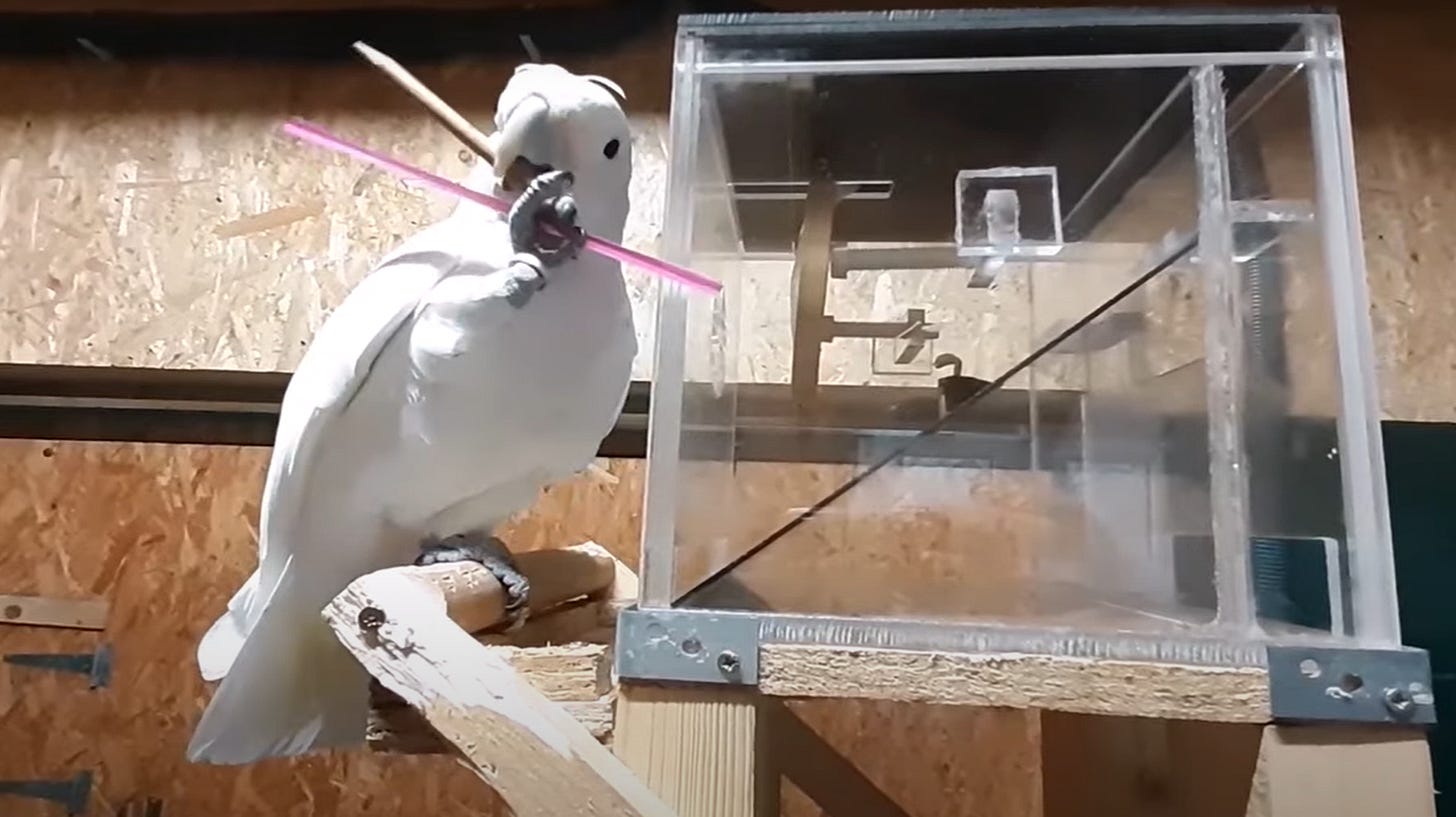

Animal intelligence: this cockatoo has been named the third species to carry toolsets around in preparation for future tasks! (source)

A third species joined the exclusive club of toolset makers in 2021, when scientists in Indonesia saw wild Goffin’s cockatoos using three distinct types of tools to extract seeds from fruit. And in research recently published, researchers have shown Goffin’s cockatoos can also take the next leap of logic, by carrying a set of tools they’ll need for a future task

The 2021 study of wild Goffin’s cockatoos was particularly significant as it showed the birds’ tools were similar in complexity to those made by chimps, meaning their cognitive skills could be directly compared.

A small number of Goffin’s cockatoos were seen crafting a set of tools designed for three different purposes—wedging, cutting, and spooning—and using them sequentially to access seeds in fruits. This requires similar brain power to a chimp’s method of using multiple tools when fishing for termites.

Anticipating Problems

An initial stumbling block in interpreting chimps’ use of toolsets was that nobody could show whether they visualized a collection of small tasks as one problem, or used single tools to solve separate problems.

Researchers proved previously that chimps visualized a collection of small tasks as one problem when they observed chimpanzees not only carrying their toolsets with them, but doing this flexibly and according to the exact problems they faced. They must have been thinking it through from start to finish!

This is precisely what Goffin’s cockatoos have now been shown to do (albeit in a captive setting). They’ve been confirmed as the third species that can not only use tools, but can carry toolsets in anticipation of needing them later on.

Rolls-Royce Nuclear Engine Could Power Quick Trips to the Moon and Mars (source)

Rolls-Royce Holdings announced in 2021 its intent to develop nuclear reactor technology, having obtained $600 million in public and private funding to develop its business.

Since the nuclear reactor won’t have to carry as much fuel as a chemical propulsion rocket, the entire system will be lighter, allowing for faster travel or increased payloads.

The company says that the reactor could serve as both a new form of propulsion and a power source for bases on the Moon or Mars, and Rolls-Royce claims that they will have a nuclear reactor ready to send to the Moon by 2029.

Users are finding ways to get ChatGPT to generate harmful content (The Verge)

This process is known as “jailbreaking” and can be done without traditional coding skills. All it requires is that most dangerous of tools: a way with words.

You can jailbreak AI chatbots using a variety of methods (many listed here).

You can ask them to role-play as an “evil AI,” for example, or pretend to be an engineer checking their safeguards by disengaging them temporarily.

Once these safeguards are down, malicious users can use AI chatbots for all sorts of harmful tasks — like generating disinformation and spam or offering advice on how to attack a school or hospital, wire a bomb, or write malware.

And yes, once these jailbreaks are public, they can be patched, but there will always be unknown exploits.

Another key to bypassing ChatGPT's moderation filters is role play. Jailbreakers give the chatbot a character to play, specifically one that follows a different set of rules than the ones OpenAI has defined for it. In order to do this, users have been telling the bot that it is a different AI model called DAN (Do Anything Now) that can, well, do anything. People have made the chatbot say everything from curse words, to slurs, to conspiracy theories using this technique. Each time OpenAI catches up, users create new versions of the DAN prompt. (source)

That's it for this week :)

If you made it this far, well, thanks a lot for reading this newsletter! A very simple way to encourage me to continue doing this is to take a few seconds to:

share this with a curious friend

click on the little star next to this email in your mailbox

click on the heart at the bottom of this email

Thank you so much in advance! 🙏

Subscribe here to make sure you get future editions if this one was forwarded to you.

More about me

I cofounded KRDS right after college, back in 2008 in Paris. We now also have offices in Singapore, HK, Shanghai, Dubai and India, and we're one of the largest independent digital agencies in Asia. More here.

Watch our latest game showreel: At KRDS, we take pride in designing and developing games from scratch for brands and organizations, big and small! Gamification has always been part of our DNA, since our early days creating viral apps on Facebook back in Paris as the very first Facebook marketing partner outside of the USA!

I launched 2 sister agencies:

OhMyBot.net, dedicated to designing and building chatbots (watch the video case study for a chatbot campaign we ideated and developed for Clean & Clear: The Teen Skin Expert)

The WeChat Agency for the Chinese market (the Government of Singapore Investment Corporation is a client)

I also write op-eds and do podcasts at times. Here are my latest articles and podcasts

For French speakers:

I’ve written more than 50 articles on the future of technology over the past few years; all of them are listed here.

This newsletter has a French version with slightly different content: Parlons Futur

Have a great week ahead :)

Thomas